|

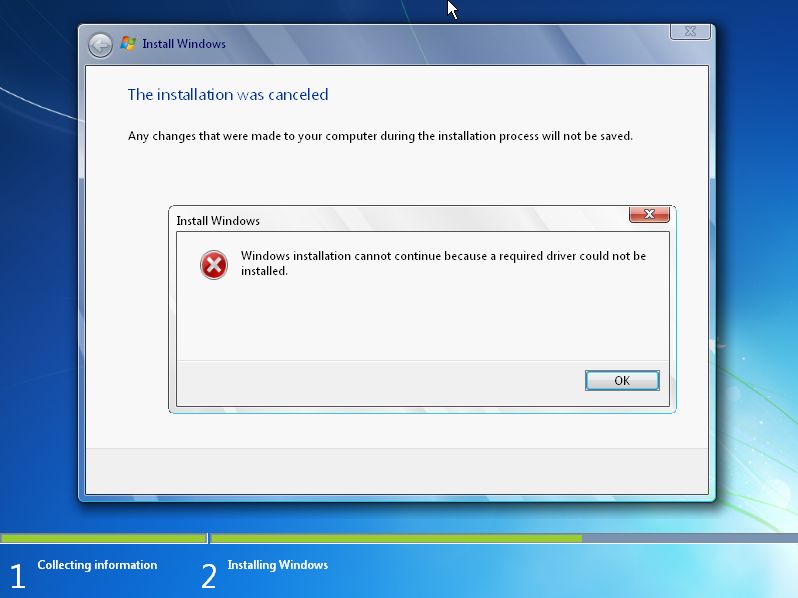

A non-descriptive Windows

7 error caused by malfunctioning drivers slipstreamed into an unattended

installation. |

Open Source Software vs. Commercial Software:

Migration from Windows to Linux

An IT Professional's Testimonial

Maintenance Headache of Windows

Dependencies on 3rd Parties

We've acknowledged that Microsoft likes to do things its own way rather than sticking to standards. Because Windows is controlled by Microsoft, vendors must write their own components and follow Microsoft's rules and regulations. One problem that causes quite a few issues is the dependency of Windows on 3rd party software. Take, for instance, something that is at the core of any system: drivers. Drivers are pieces of code that tell the operating system how to operate devices and certain functions. Microsoft attempts to provide drivers for many common devices out of the box. This will at least get you a working system that boots, but for optimal performance you will often need to obtain drivers from the device manufacturer and install them. This sounds all fine and dandy, right? Well, serious problems arise when 3rd party drivers are unstable: they can cause Windows to malfunction or, in the worst case, lock up completely. And understandably so, since Microsoft can in no way test every single driver that is released by 3rd parties. Yes, Microsoft has established its own driver signing infrastructure that tries to warn users if they are about to install an untested driver. This was actually a great idea on Microsoft's part. But it still leaves much to be desired, as it requires 3rd parties to come forward and get their drivers certified by Microsoft. So, as you can imagine, not all vendors are going to comply, for whatever reasons they have.


Not only is it difficult to get all vendors to contribute clean drivers, but even when they do, many complications can arise because you have hundreds or even thousands of 3rd parties all contributing their own products into a huge mixing bowl. Installation problems can become commonplace because of this. For instance, Microsoft allows drivers to be slipstreamed (included so that they are automatically installed) into Windows installations. Microsoft calls this the "unattended" installation. Up front, this sounds great, right? I mean, who wouldn't love an automatic install of Windows in which all of the drivers are installed automatically? Ironically, this is exactly what Linux does, because the kernel is pre-compiled with all drivers linked to it. But with Windows, Microsoft can't possibly compile every vendor's 3rd party driver into the mainstream Windows distribution. So slipstreaming allows customers to build their own Windows installation to suit their needs. This is commonly used by companies that deploy many machines of the same type to create a homogeneous environment of computers. Unfortunately, I have seen the Windows installation fail about 25% of the time when slipstreaming drivers into it, even in Windows 7, the latest version of Windows. It can quickly become a very big headache to try to work around driver installation issues, too. The error typically gives no useful information to help find out which driver is causing the problem. Again, the Linux kernel is free of these issues because every single driver is compiled for the kernel, either directly into it or as a module. This avoids the issues above that come from multiple 3rd parties all trying to combine their contributions into a huge mixing bowl.

In Linux you have the kernel, which is the code that controls everything that runs on the system, including drivers. The difference here is that the drivers are often developed by the same community that writes sections of the kernel itself, or by other contributors from the open source community. There are also some commercially released (3rd party) drivers. But since these 3rd party drivers are so few and far between and the open source community is so vast, they can easily and rigorously be tested for defects. Overall, unlike Windows, where there is a dependency on 3rd parties, Linux drivers are optimized specifically for the kernel and included as modules of it. With this model, you essentially eliminate the need for 3rd parties to write their own drivers and submit them. And, with the vastness of the open source community, testing of the device drivers is very thorough. The Linux kernel is also very modular: code for drivers and functionality can be inserted and removed while the kernel is actively running. So even if a driver is at fault or malfunctions, the kernel will keep running and the bad driver can usually be removed. Fortunately, I have never had any experiences with bad drivers in Linux, so I do not have a lot of feedback on having to remove their modules from a running kernel.
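To make the idea of loadable modules a little more concrete, here is a minimal sketch in Python that reads the kernel's list of currently loaded modules from `/proc/modules` and reports which ones have no users and could, in principle, be unloaded. The helper names `parse_proc_modules` and `removable` and the sample data are my own illustration, not part of any standard tool, and the sketch only inspects the list. Actually loading or unloading a module is normally done with tools such as `modprobe` and `rmmod`.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class LoadedModule:
    name: str
    size_bytes: int
    ref_count: int        # -1 when the kernel does not report a usable count
    used_by: list         # names of modules that depend on this one


def parse_proc_modules(text):
    """Parse text in the /proc/modules format into LoadedModule records."""
    modules = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 4:
            continue  # skip blank or malformed lines
        name, size, refs, deps = fields[:4]
        try:
            ref_count = int(refs)
        except ValueError:
            ref_count = -1  # some kernels print "-" here
        # The dependents field is "-" when empty, otherwise comma-terminated names.
        used_by = [] if deps == "-" else [d for d in deps.split(",") if d]
        modules.append(LoadedModule(name, int(size), ref_count, used_by))
    return modules


def removable(modules):
    """Names of modules with no users, i.e. candidates that could be unloaded."""
    return [m.name for m in modules if m.ref_count == 0 and not m.used_by]


# Hypothetical sample data for illustration; not taken from a real machine.
SAMPLE = """\
usb_storage 81920 0 - Live 0x0000000000000000
snd_hda_codec 172032 1 snd_hda_intel, Live 0x0000000000000000
snd_hda_intel 57344 3 - Live 0x0000000000000000
"""

if __name__ == "__main__":
    path = Path("/proc/modules")
    # Fall back to the sample when not running on Linux.
    text = path.read_text() if path.exists() else SAMPLE
    for name in removable(parse_proc_modules(text)):
        print(name)
```

With the sample data, only `usb_storage` is reported, since the other two modules are still in use. On a real system the output depends on which modules happen to be loaded.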

Another benefit of not having to rely on 3rd parties for device drivers and other software is compatibility. I think we've all had many instances where we have upgraded from one version of Windows to another and suddenly found that there are no drivers for our device! This has been a problem for years, particularly in the move from Windows 95/98/ME to Windows 2000/XP, because of the complete restructuring of Windows between those versions. Unfortunately, when you discover that a device is no longer supported, about the only option is to sell it or give it to somebody who uses a version of Windows that still has a supported driver. Fortunately, this is not the case in Linux, since the kernel and its drivers are backwards compatible. What does this mean? It means that support for even the oldest of devices is retained in the kernel and its modules, even in the latest versions. So even for an old scanner or whatever device you may have, if Linux supported it in the past, it will still support it in the current kernel.

But wait, there is more. Even service pack releases by Microsoft may need intervention and support from 3rd parties, and providing that support is entirely optional from the standpoint of the 3rd parties themselves. The problem with service packs is that they are patches to the operating system, and they are sometimes mandatory upgrades. This means that users can potentially be stuck with a broken operating system after installing a service pack, and may never get a solution to their problem if the 3rd party does not respond with a fix! This is a real and serious problem, and it can leave the consumer with the single option of buying new hardware or software that is guaranteed to work with the service pack. The other option is to skip the service pack or uninstall it, which can get messy as Microsoft continues to push it to the computer, and may even stop supporting versions that don't have the service pack installed. Obviously this is the worst-case scenario, but unfortunately it has become a very common issue for those using Microsoft products. For instance, Microsoft released Service Pack 1 for Windows Vista in hopes of fixing a plethora of problems with the initial software release. While this service pack was a desperate attempt by Microsoft to fix a lot of issues, it also introduced a list of new ones. Microsoft then took responsibility and tried to convince as many as possible of the 3rd parties that supply their own device drivers and software to release upgrades that would work with the service pack. Unfortunately, there is nothing that can force the 3rd parties to actually release updates to address the issues. Many customers have been left stranded with hardware devices and installed software that simply stopped working, with no solution other than buying new devices or software.

This is a huge deal to many computer customers and can impose the high cost of buying new hardware and software when you shouldn't need to. Sure, this can cost individuals using computers at home, but what about companies that have handfuls, hundreds, or even thousands of computers, devices, and software packages? As you can imagine, things can get very messy, and this can put a lot of stress not only on a company's finances but also on its employees. As a result, these costs have the potential to skyrocket in all sorts of scenarios. Now, let's examine the fact that Linux does not have these compatibility problems, since it is VERY backwards compatible with hardware and software. Old hardware will work today with the latest version of Linux, and will continue to work in future versions of Linux without issues. I will go into more detail on this subject further down.

Backwards Compatibility

As I mentioned previously, we all know that software and hardware become out of date over time. This is just a fact. But if you look closely and pay attention, you will notice that Microsoft has a way of forcing its consumers into upgrading by setting an end-of-life date for its older versions of software. And, if you think about it, Microsoft makes money from sales of the new version when people upgrade. So this is a good thing for Microsoft, but not such a good thing for consumers.

The problem with migrating from one version of Windows to another is that the versions are heterogeneous: each one can differ substantially from the last. There is no guarantee that devices and programs that run in one version of Windows will work in another. This can cause a snafu if the manufacturer of a device has not provided a driver for each version, since each version of Windows often requires hardware drivers written specifically for it. Linux is much more homogeneous, in that each version is fairly similar to the last. Newer versions of a Linux distribution will always include a newer kernel, and will often simply include newer versions of the software that was already included in the previous version(s). Sometimes new programs will be introduced in newer versions of a distribution, but very seldom will a program be dropped without a solid replacement in place. If one is dropped or slated to be dropped in a future version, a replacement with the same functionality will be provided to take its place. Overall, you can bet that your hardware devices and your programs will keep working when you migrate to a newer version of a Linux distribution. Unfortunately, doing an in-place upgrade (installing over the top of your current working installation) of Linux from one distribution release to another is not usually a smooth ride. Both Linux and Windows can provide a bumpy ride when doing an in-place upgrade. It is usually recommended to do a fresh installation, which is a cleaner method and will produce great results, even though it is more time consuming than an in-place upgrade because all software settings are lost. In fairness, Microsoft does provide a well-thought-out path for upgrading over the top of a working Windows installation. Obviously this is done so that people will be more tempted to buy the new version and not worry about upgrading. However, even though the installation process is somewhat streamlined, hardware compatibility is not always guaranteed and can cause major problems.

On the software side, Linux is also extremely good at retaining backwards compatibility for applications. Many users of Windows experienced headaches when they upgraded from Windows 95/98/ME to Windows 2000/XP/Vista, when many older applications (especially those written for DOS, Microsoft's non-GUI operating system) would flat out not work anymore. Microsoft attempted to retain compatibility by allowing some DOS applications to run, but it was (and still is) far from a complete solution. Starting in Windows XP, Microsoft also added compatibility modes for Windows 95 and 98, which helped but didn't provide a complete blanket solution, although I have seen high success rates in running older Windows 95/98-based software on Windows XP without needing compatibility mode. Luckily, some talented programmers created DOS emulators that run in Windows, which essentially emulate DOS itself so that older applications can still be used. One of the most popular programs like this is DOSBox, which is open source. In Linux, by contrast, you can at least rest assured that you should be able to run older applications in newer versions (except for very rare cases where there is a missing dependency in the newer version). This is due not only to the fact that the kernel itself is very backwards compatible, but also to the fact that the environments the applications were meant to run in are still present in even the latest Linux distributions. Take, for example, an astronomy program that I use called XEphem. It was first written in 1991 by Elwood Downey, and uses Motif in the X Window System. Since both the X Window System and Motif are still fully supported today, this application still runs as well as ever on my computer running the latest version of Fedora Linux, version 10. Unix and Linux retain their core components over time and build upon them. This is in sharp contrast to Windows, where old core components are thrown out.

The bad news about Windows is that Microsoft controls the source code, so it alone is in charge of releasing patches. This means that if you are still running a copy of Windows 98 and a security hole is found that affects it, you can do nothing about fixing it. You are stuck with Windows 98 the way it is, because Microsoft has dropped all support and patches for it. Running an operating system that is 10 years old is not good practice, but for some it is the only feasible choice if the computer cannot be upgraded to newer hardware, finances are tight, etc. Why try to fix something that isn't broken, right? Well, luckily, with the open source nature of Linux, your chances of being able to keep running an older version are much better. This is all due to the power of open source: access to the source code. In theory, you could run a system using Red Hat 4.2 on a computer and write your own patches. I wouldn't recommend this to anybody, however, since it probably wouldn't be worth the time and effort. But I have installed newer applications on Linux installations that are 8 years old, and they have worked well. It usually requires compiling the applications from source code, which sounds impossible, but in reality it is not. It can be done with a little time and effort.

A good example that I ran across recently of Microsoft not providing backwards compatibility, even in one of its own products, is Microsoft Live Meeting 2005. Those who have used this product know that it is a very good program for hosting online meetings or training sessions. Microsoft hosts online services for it as well, allowing companies to use it without the overhead of maintaining a server. While Live Meeting 2005 was predominant, the hosted services at Microsoft supported that version, until Live Meeting 2007 came out. Eventually, Microsoft started migrating the accounts for its online services to work with 2007, but failed to keep any backwards compatibility for 2005. Once an account was migrated from 2005 to 2007 mode, users were forced to install the 2007 version of Live Meeting in order to continue using the service. The only alternative was the Java version of the Live Meeting client that Microsoft also offers, so there was a workaround at least. But the presenter needed the full client installed, and was forced to upgrade in order to continue using the service. For many companies, this caused a large headache, since Live Meeting 2007 had to be installed on each presenter's computer (and on attendees' computers if they needed the full client). Fortunately, Microsoft does offer the full Live Meeting client for free.

